According to the website, backups for Cloud Servers are only available for instances with a memory size of 2GB or less. However, this restriction only applies to (some of) the control panel, and not the API itself. It is still quite possible to take snapshots of your larger servers, though there are a few caveats along the way.

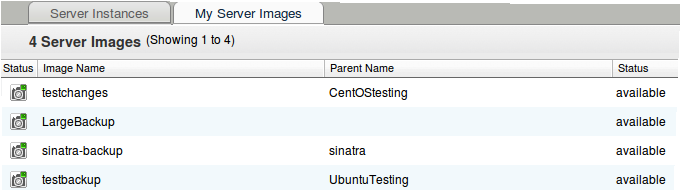

To take snapshots of your >2GB Cloud Servers through the control panel, you first need to go to Hosting -> Cloud Servers and click on the “My Server Images” tab. Note that you do not want to go to an individual server’s overview page to take the image; it will not work for >2GB servers.

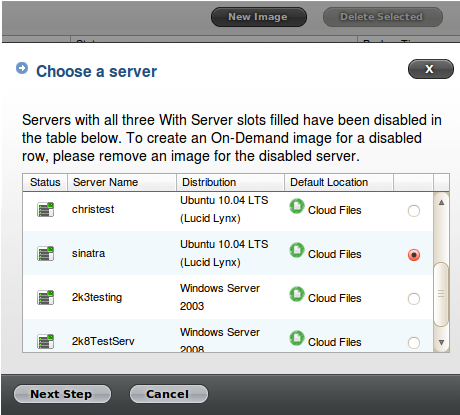

Just hit the “New Image” button on this page and you can select any of your servers from the list. In this case, I will take a backup of my 4GB server called “sinatra”.

Pick a name for your image (I called mine “sinatra-backup”), wait for it to complete, and voila: you have a backup of your >2GB server.

You can also create backups for >2GB servers directly through the API. The script in this earlier Failverse blog post works for this as well; simply run it by hand instead of putting it in a cron job.
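
If you would rather see what such an API call looks like, here is a minimal Python sketch. It assumes the first-generation Cloud Servers API (v1.0), where an image is created by POSTing a JSON body with `serverId` and `name` to `/images` under your server management URL, authenticated with an `X-Auth-Token` header. The function name `build_image_request`, the example URL, token, and server ID are all placeholders of mine, not anything taken from the script mentioned above, so check the API documentation for your account before relying on it.

```python
import json
import urllib.request


def build_image_request(management_url, auth_token, server_id, image_name):
    """Build (but do not send) a create-image request.

    Assumption: the v1.0 API accepts POST <management_url>/images with a
    body of the form {"image": {"serverId": ..., "name": ...}}.
    """
    body = json.dumps(
        {"image": {"serverId": server_id, "name": image_name}}
    ).encode("utf-8")
    return urllib.request.Request(
        url=management_url.rstrip("/") + "/images",
        data=body,
        method="POST",
        headers={
            "X-Auth-Token": auth_token,  # token obtained from the auth endpoint
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
    )


if __name__ == "__main__":
    # Placeholder values; substitute the management URL and token
    # returned when you authenticate, and your own server ID.
    req = build_image_request(
        "https://servers.api.example.com/v1.0/123456",
        "your-auth-token",
        12345,
        "sinatra-backup",
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

The sketch only builds the request so you can inspect it; passing it to `urllib.request.urlopen(req)` would actually submit it.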

Caveats

Although you can use the two aforementioned methods to create backups of >2GB servers, there are a number of restrictions still in place.

Linux:

- The total amount of disk space currently used cannot be higher than 75GB. You can check how much space you are using with the “df -h” command.

- The total number of inodes in use cannot exceed 3 million. You can check how many inodes are in use with the “df -i” command. If your inode usage is inordinately high, you can reduce it by deleting unnecessary files (log files or stale connections are generally the culprits here) or by temporarily bundling many small files into tarballs.

- If it takes longer than 2 hours for the file transfer portion of the backup to finish, it will fail. This may occur if you have a large amount of both disk space and inodes in use, though not enough to trigger the hard caps. Reducing your inode usage can help reduce the time it takes to perform the backup.
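
The first two Linux limits are easy to check before you kick off a backup. The following is a rough sketch that reads the same numbers “df” reports via `os.statvfs` and compares them against the 75GB and 3 million inode caps listed above. It only looks at a single filesystem (the root filesystem by default) and treats 1GB as 1024³ bytes; both are assumptions on my part, and the function names are just illustrative.

```python
import os

# Limits taken from the caveats above; GB is assumed to mean 1024**3 bytes.
MAX_USED_BYTES = 75 * 1024**3
MAX_USED_INODES = 3_000_000


def filesystem_usage(path="/"):
    """Return (used_bytes, used_inodes) for the filesystem containing path."""
    st = os.statvfs(path)
    used_bytes = (st.f_blocks - st.f_bfree) * st.f_frsize
    used_inodes = st.f_files - st.f_ffree
    return used_bytes, used_inodes


def check_limits(used_bytes, used_inodes):
    """Return a list of human-readable problems; empty if under both caps."""
    problems = []
    if used_bytes > MAX_USED_BYTES:
        problems.append(
            "disk usage is %.1fGB, over the 75GB cap" % (used_bytes / 1024**3)
        )
    if used_inodes > MAX_USED_INODES:
        problems.append(
            "inode usage is %d, over the 3 million cap" % used_inodes
        )
    return problems


if __name__ == "__main__":
    problems = check_limits(*filesystem_usage("/"))
    if problems:
        for problem in problems:
            print("WARNING: " + problem)
    else:
        print("Disk and inode usage are within the backup limits.")
```

This does nothing about the 2 hour transfer limit, which depends on how long the copy takes rather than on a number you can read off the filesystem.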

Windows:

- The total size of the sparse disk file that your virtual hard disk resides on cannot be larger than 160GB. What this means is that the peak amount of disk space in use at any point in your server’s history cannot have exceeded 160GB. If you were using more disk space than that in the past, you are basically SOL; the only way to make a backup image is to copy your data to a new server (assuming you currently have <160GB in use) and then take a snapshot of that new machine.
